NVIDIA DGX SuperPOD with H100 systems provides leadership-class AI infrastructure designed for the most challenging training and inference workloads. It is a full-stack data center platform that integrates high-performance computing, storage, networking, and software management to deliver maximum performance at scale.

* Turnkey AI supercomputer architecture optimized for generative AI and large language models (LLMs).

* Scalable design supporting clusters of NVIDIA H100 Tensor Core GPUs.

* Integrated NVIDIA InfiniBand networking for high-bandwidth, low-latency communication.

* Includes the NVIDIA AI Enterprise software suite for end-to-end AI development and deployment.

* Features NVIDIA Mission Control for streamlined infrastructure management and operations.

* Validated storage configurations from leading partners for high-throughput data access.

* Purpose-built for trillion-parameter model training and massive-scale inference.

* Comprehensive support and services from NVIDIA AI experts throughout the lifecycle.

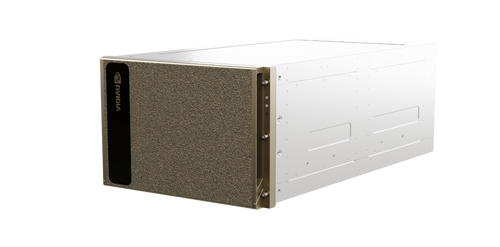

* High-density compute nodes featuring 8x NVIDIA H100 GPUs per system.

* Advanced thermal management and power distribution for enterprise-grade reliability.
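
As a rough sizing illustration, the 8-GPU-per-system figure above can be turned into aggregate counts for a cluster. The sketch below is a minimal example, not an official sizing tool: the function names are hypothetical, and the 80 GB per-GPU memory default is an assumption that should be checked against the specification of the systems actually deployed.

```python
# Minimal cluster sizing sketch (hypothetical helper names).
GPUS_PER_SYSTEM = 8  # from the text above: 8x NVIDIA H100 GPUs per system


def total_gpus(num_systems: int) -> int:
    """Return the aggregate GPU count for a given number of systems."""
    if num_systems < 0:
        raise ValueError("num_systems must be non-negative")
    return num_systems * GPUS_PER_SYSTEM


def total_gpu_memory_gb(num_systems: int, memory_per_gpu_gb: int = 80) -> int:
    """Return aggregate GPU memory in GB.

    The 80 GB default is an assumption, not a figure stated in this document.
    """
    return total_gpus(num_systems) * memory_per_gpu_gb


if __name__ == "__main__":
    # The system counts below are arbitrary examples, not validated configurations.
    for systems in (1, 4, 32):
        print(
            f"{systems} system(s): {total_gpus(systems)} GPUs, "
            f"{total_gpu_memory_gb(systems)} GB GPU memory"
        )
```

The system count is the only input here; actual deployment sizes depend on the validated configurations available for the platform.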
