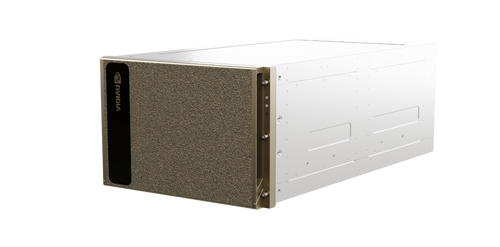

The NVIDIA DGX SuperPOD with GB200 systems is a leadership-class, liquid-cooled AI infrastructure designed for the most challenging generative AI training and inference workloads. It provides a turnkey, full-stack data center platform that integrates high-performance compute, storage, networking, and software management to deliver maximum performance at scale.

- Powered by NVIDIA Grace Blackwell Superchips for massive-scale generative AI training and inference.

- Liquid-cooled rack-scale architecture optimized for high-density compute and energy efficiency.

- Scalable to tens of thousands of GPUs to tackle trillion-parameter generative AI models.

- Integrated NVIDIA BlueField-3 DPUs for offloading, accelerating, and isolating networking and security tasks.

- High-speed interconnectivity via NVIDIA ConnectX-7 or ConnectX-8 InfiniBand and Ethernet adapters.

- Includes the NVIDIA AI Enterprise software suite for end-to-end AI development and deployment.

- Managed through NVIDIA Mission Control for streamlined operations and infrastructure resiliency.

- Turnkey deployment with validated high-performance storage and networking configurations.

- Purpose-built for AI factories, providing a ready-to-run environment for enterprise supercomputing.

- Optimized for high-throughput, low-latency communication across the entire cluster fabric.
