The NVIDIA DGX H200 is NVIDIA's flagship AI supercomputing platform, designed for the most demanding generative AI and large language model (LLM) workloads. It integrates NVIDIA H200 Tensor Core GPUs with high-speed networking and a comprehensive software stack to deliver high performance and scalability.

- Powered by eight NVIDIA H200 Tensor Core GPUs, each with 141 GB of HBM3e memory.

- Delivers 1,128 GB of total GPU memory and high memory bandwidth for large-scale AI training and inference.

- Integrated with NVIDIA NVLink and NVSwitch for high-speed GPU-to-GPU communication.

- Includes NVIDIA BlueField-3 DPUs for offloading networking, storage, and security tasks.

- Optimized for generative AI, large language models (LLMs), and high-performance computing (HPC).

- Full-stack solution including NVIDIA AI Enterprise software and DGX OS.

- Scalable architecture from a single DGX system to massive DGX SuperPOD clusters.

- Enterprise-grade reliability with 24/7 support and proactive monitoring.

- High-efficiency power and cooling design for modern data center environments.

NVIDIA

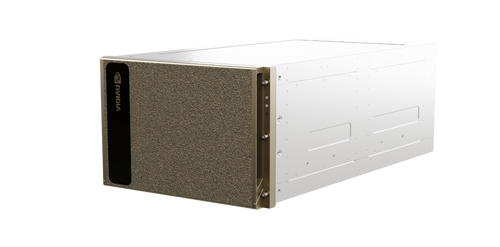

NVIDIA - DGX H200 AI Server Platform - AI Supercomputing Infrastructure

For a quote, contact us at info@tropical.com

- SKU: DGX H200 AI Server Platform

- Weight: 287.00 lb

- Width: 19.00 in

- Height: 14.00 in

- Depth: 35.00 in
