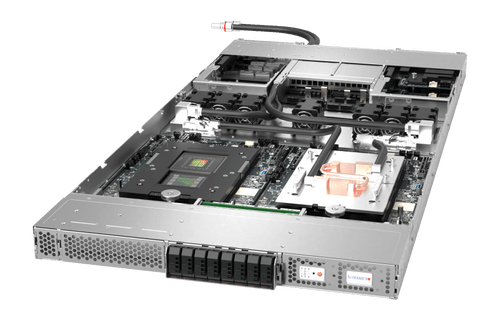

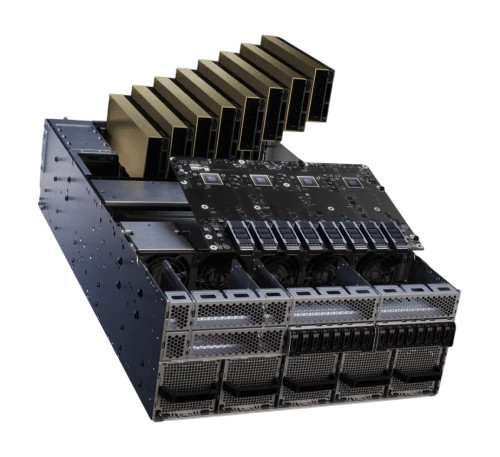

The HPE MGX-based AI server is a modular, high-density compute platform designed to accelerate AI model training and inference workloads. Built on the NVIDIA MGX architecture, it provides a flexible foundation for generative AI, large language models (LLMs), and data-intensive scientific computing.

- Modular NVIDIA MGX architecture for rapid deployment and future-proof scalability.

- Optimized for high-performance AI training, fine-tuning, and inference at scale.

- Supports the latest GPU accelerators for maximum parallel processing throughput.

- Integrated HPE iLO Silicon Root of Trust for hardware-level security and firmware protection.

- High-bandwidth interconnects to minimize latency in distributed AI clusters.

- Advanced thermal management designed for high-density rack environments.

- Seamless integration with HPE GreenLake for cloud-like operational flexibility.

- Compatible with HPE Compute Ops Management for centralized lifecycle orchestration.

- Engineered for mission-critical reliability in hybrid and private cloud AI factories.

HPE

HPE - MGX-based AI Server - High-Performance Modular AI Compute Platform

For a quote, contact us at info@tropical.com

- SKU: HPE MGX-based AI Server

- Weight: 85.00 lb

- Width: 17.50 in

- Height: 7.00 in

- Depth: 32.00 in
