
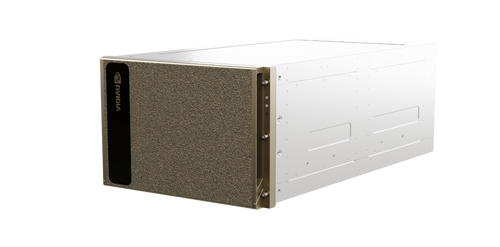

NVIDIA - DGX GB200 NVL72 - Rack-Scale Liquid-Cooled AI Supercomputer

The NVIDIA GB200 NVL72 is a rack-scale, liquid-cooled exascale computer designed for real-time trillion-parameter large language model (LLM) inference and massive-scale AI training. It integrates 36 Grace CPUs and 72 Blackwell GPUs into a single NVLink...

NVIDIA - DGX SuperPOD (H200) - AI Supercomputing Infrastructure

NVIDIA DGX SuperPOD with H200 systems is a leadership-class AI infrastructure designed for the most challenging AI training and inference workloads. It provides a turnkey, full-stack data center platform that integrates high-performance compute, storage,...

NVIDIA - DGX SuperPOD (B200) - AI Infrastructure Platform

NVIDIA DGX SuperPOD with B200 systems is a turnkey AI data center solution designed for organizations building AI factories. It provides a full-stack platform that integrates high-performance computing, storage, networking, and software management to...

NVIDIA - DGX SuperPOD (H100) - Scalable AI Supercomputing Infrastructure

NVIDIA DGX SuperPOD with H100 systems provides leadership-class AI infrastructure designed for the most challenging training and inference workloads. It is a full-stack data center platform that integrates high-performance computing, storage, networking,...

NVIDIA - DGX SuperPOD (A100) - AI Data Center Infrastructure

The NVIDIA DGX SuperPOD is a turnkey AI data center solution designed to provide leadership-class infrastructure for the most challenging AI training and inference workloads. It integrates high-performance compute, networking, storage, and software into...

NVIDIA - DGX Station (H100) - AI Development Workstation

The NVIDIA DGX Station (H100) is a premier AI supercomputer in a workstation form factor, designed for data science teams and researchers working in office environments. It delivers data center-class performance without the need for specialized power or...

NVIDIA - DGX BasePOD (GB200) - Blackwell AI Infrastructure Foundation

NVIDIA DGX BasePOD is a validated reference architecture designed to simplify the deployment and scaling of enterprise AI infrastructure. Built with NVIDIA Blackwell GB200 systems, it provides a high-performance foundation for training large language...

NVIDIA - DGX BasePOD (B200) - Blackwell-Powered AI Infrastructure System

The NVIDIA DGX BasePOD (B200) provides a proven reference architecture for building and scaling enterprise AI infrastructure. Built on the NVIDIA Blackwell architecture, this unified system accelerates the entire AI pipeline from training and fine-tuning...

NVIDIA - DGX BasePOD (H200) - Enterprise AI Infrastructure Reference Architecture

The NVIDIA DGX BasePOD (H200) is a proven reference architecture designed to scale AI infrastructure for the enterprise, providing a foundation for building AI Centers of Excellence. It integrates high-performance NVIDIA DGX H200 systems with certified...

NVIDIA - DGX BasePOD (H100) - Enterprise AI Infrastructure Reference Architecture

NVIDIA DGX BasePOD is a proven reference architecture designed to scale AI infrastructure for the enterprise, providing a foundation for building AI Centers of Excellence. It combines high-performance NVIDIA DGX systems with certified storage and...

NVIDIA - DGX H200 - AI Infrastructure System

The NVIDIA DGX H200 is the premier AI factory infrastructure designed for enterprise-scale generative AI, natural language processing, and deep learning. It integrates eight NVIDIA H200 Tensor Core GPUs with advanced networking and software to provide a...

NVIDIA - DGX A100 - AI Infrastructure System

The NVIDIA DGX A100 is the universal system for all AI workloads, offering unprecedented compute density, performance, and flexibility in the world's first 5-petaFLOPS AI system. It integrates eight NVIDIA A100 Tensor Core GPUs, providing a unified...